Don't Teach Your Agents Karate

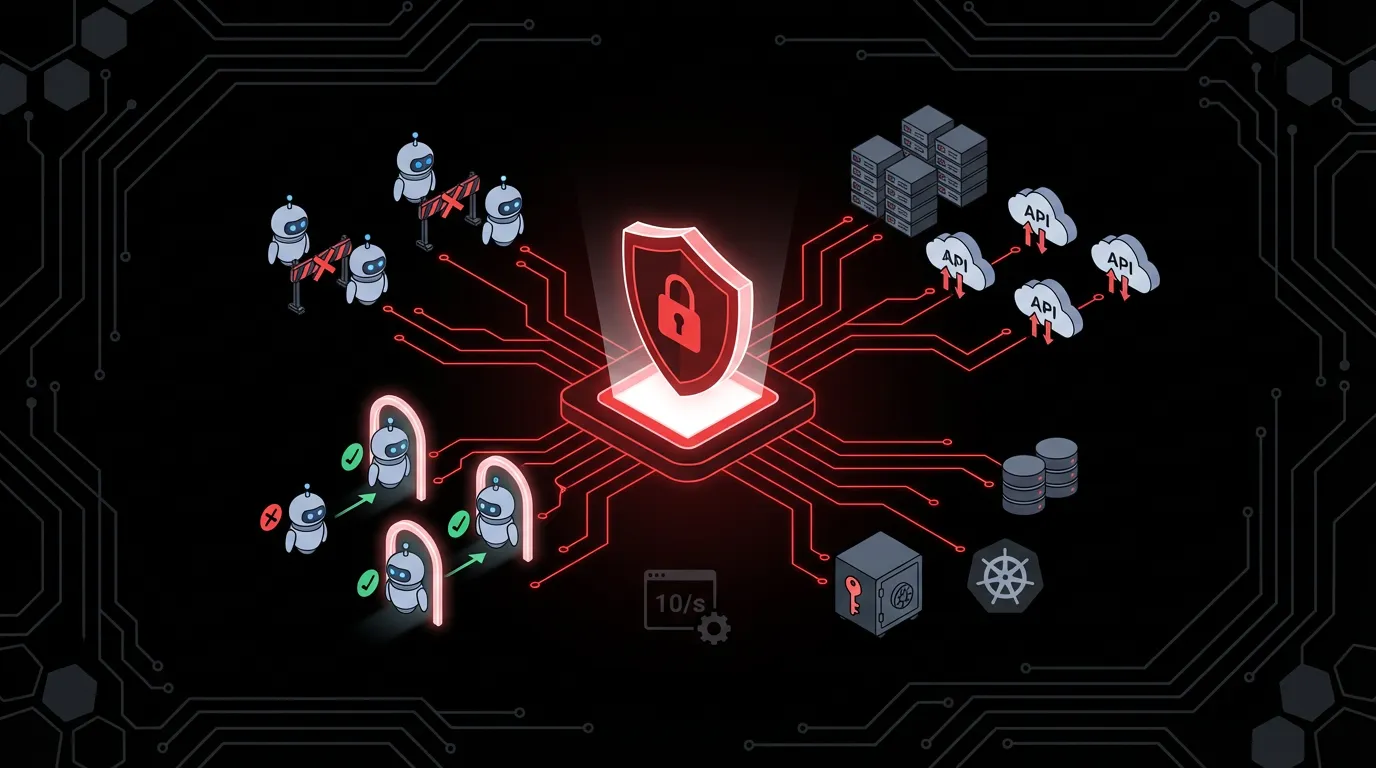

Every agent team rebuilds the same auth, rate limiting, and credential management. I built an agent gateway for Kubernetes that generates it all from two CRDs so the people building agents never touch security and the people running the platform never touch agent code.

I keep watching the same pattern repeat across teams building agents on Kubernetes.

A team builds an agent. It calls external APIs, talks to other agents, maybe exposes MCP tools. At some point someone asks, “Who can call this thing? How do we rate limit it? How do we make sure it doesn’t leak the OpenAI API key?” And then the team spends two weeks building auth middleware they’ll never maintain properly.

Multiply that by every agent team in the org and you get a mess. Inconsistent token validation. Hardcoded API keys in environment variables. Rate limiting that exists in some agents and not others. No central visibility into who can call what.

This is the part of agent development that nobody wants to do, and everybody does badly. So I built agent-access-control — an agent gateway for Kubernetes that makes security a platform problem instead of an application problem.

The numbers are worse than you think

Gravitee surveyed 900+ practitioners for their State of AI Agent Security report. Some findings that stuck with me:

- 45.6% of teams still use shared API keys for agent-to-agent auth.

- 27.2% have resorted to custom, hardcoded logic for authorization.

- Only 14.4% report that all agents went live with full security approval.

That last one is telling. 80.9% of teams have moved past planning into active testing or production. But barely a seventh of them have security’s blessing. The agents are out there. The controls aren’t.

OWASP released their Agentic AI Top 10 in December 2025. Three of the top four risks center on identity, tool access, and delegated trust. Their new concept of “least agency” is the principle of least privilege applied to autonomous systems. An agent should have the minimum autonomy, tool access, and credential scope needed for its task. Nothing more.

In practice, most agents hold 10x more privileges than required. A SaaS integration defaults to “read all files” when only one folder is needed. That’s faster for developers, but it means a compromised agent gets everything.

What I kept running into

I work on Kubernetes-based AI infrastructure. Across different projects, I kept hitting the same three problems:

Inbound access control. Agent A calls Agent B. But who checks that Agent A is allowed? Each agent team builds its own JWT validation, its own allowlists. Or worse, they don’t. They assume the network is safe because it’s inside the cluster. (It’s not.)

Rate limiting. An orchestrator agent fires off 200 requests per second to a downstream agent during a batch job. The downstream agent falls over. Nobody set limits because “we’ll add that later.” Later never comes.

Outbound credential management. The agent needs to call the GitHub API with an OAuth token. It needs to call OpenWeatherMap with an API key. Those credentials get baked into ConfigMaps, mounted as environment variables, and forgotten. The agent holds the credential directly. If the agent is compromised, so is the credential.

Every team solves these problems on their own. And every team solves them just enough to ship, then moves on.

What if the platform handled it?

The idea is pretty direct: take those three concerns out of the agent entirely and push them into the platform.

I built a Kubernetes operator called Agent Access Control. It wires together Gateway API, the MCP Gateway, and Kuadrant into something that acts as an agent gateway, driven by two CRDs.

The first CRD is AgentCard. It represents an agent’s identity: what protocols it speaks (A2A, MCP, REST), what skills it offers, what port it listens on. A discovery controller creates these automatically by probing running agents for their /.well-known/agent.json or MCP tools/list endpoint. The person building the agent never writes a CRD. They add a label to the Deployment manifest, commit to git, and GitOps handles the rest.

The second CRD is AgentPolicy. This is where the platform team defines access rules. It selects agents by label and declares:

- Who can call this agent (by Kubernetes ServiceAccount, so identity is cryptographic, not a string someone typed)

- What this agent can call (other agents, also by ServiceAccount)

- What external APIs it can reach, and how credentials are handled (Vault, token exchange, passthrough, or deny)

- How many requests per minute it accepts

From those two resources, the controller generates six types of Kubernetes objects:

| Generated Resource | What It Does | Who Enforces |
|---|---|---|
| HTTPRoute | Routes traffic through the gateway | Gateway API |
| AuthPolicy | JWT validation + ServiceAccount-based access control | Authorino (Kuadrant) |
| RateLimitPolicy | Per-agent rate limits with centralized counters | Limitador (Kuadrant) |
| NetworkPolicy | Deny-all egress, allow DNS + gateway only | Kubernetes |
| ConfigMap | Per-host credential injection and routing | Auth sidecar |
| MCPServerRegistration | Registers MCP tools for federation | MCP Gateway |

The agent contains zero auth code. You commit a labeled Deployment to git, GitOps deploys it, and the platform handles security.

How the pieces fit

Say someone commits a weather agent with the kagenti label. GitOps deploys it. The discovery controller finds it, reads its capabilities, and creates:

```yaml
apiVersion: kagenti.com/v1alpha1
kind: AgentCard
metadata:
  name: weather-agent
  labels:
    tier: standard
spec:
  description: "Weather forecasts and alerts"
  protocols: [a2a, rest]
  skills:
    - name: get-forecast
    - name: weather-alerts
  servicePort: 8080
```

The platform engineer has a policy that covers all tier: standard agents:

```yaml
apiVersion: kagenti.com/v1alpha1
kind: AgentPolicy
metadata:
  name: standard-tier
spec:
  agentSelector:
    matchLabels:
      tier: standard
  ingress:
    allowedAgents: [orchestrator, planner]
  external:
    defaultMode: deny
    rules:
      - host: api.openweathermap.org
        mode: vault
        vaultPath: secret/data/openweathermap
        header: x-api-key
      - host: weather.gov
        mode: passthrough
  rateLimit:
    requestsPerMinute: 60
```

The controller reconciles. An HTTPRoute goes up that exposes the agent through the gateway on /agents/weather-agent. Authorino gets an AuthPolicy scoped to system:serviceaccount:default:orchestrator and system:serviceaccount:default:planner. If you’re not one of those two ServiceAccounts, you’re not getting in. Limitador gets a 60 req/min rate limit. A NetworkPolicy locks down the pod’s egress to DNS and the gateway only. And the sidecar gets a ConfigMap telling it how to handle each external host.
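For a sense of what that reconciliation produces, here is a rough sketch of two of the generated objects for the weather agent. Treat it as illustrative rather than the operator’s exact output: the object names, the agent-gateway parent reference, and the per-agent limit key are assumptions, and the RateLimitPolicy fields follow the Kuadrant v1 API, which may differ in the version you have installed.

```yaml
# Sketch only: names and the Gateway reference are assumptions.
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: weather-agent
spec:
  parentRefs:
    - name: agent-gateway            # assumed Gateway name
      namespace: agent-demo          # assumed Gateway namespace
  rules:
    - matches:
        - path:
            type: PathPrefix
            value: /agents/weather-agent
      backendRefs:
        - name: weather-agent        # Service in front of the agent pods
          port: 8080                 # servicePort from the AgentCard
---
apiVersion: kuadrant.io/v1
kind: RateLimitPolicy
metadata:
  name: weather-agent
spec:
  targetRef:
    group: gateway.networking.k8s.io
    kind: HTTPRoute
    name: weather-agent
  limits:
    per-agent:                       # assumed limit name
      rates:
        - limit: 60                  # requestsPerMinute from the AgentPolicy
          window: 1m
```

Both objects hang off the same HTTPRoute, which is why the route’s status is the natural place to confirm that the policies took effect.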

When I check the HTTPRoute status on the cluster:

```
kuadrant.io/AuthPolicyAffected: True
kuadrant.io/RateLimitPolicyAffected: True
```

Authorino and Limitador are actively enforcing. Not configured. Enforcing. On a live cluster, right now.

The outbound side matters more than people think

Everyone focuses on inbound auth. “Who can call my agent?” That’s important but it’s the easier half.

The harder question is: “What can my agent call, and with what credentials?”

CyberArk wrote about this in their 2026 agent security market analysis. Credential velocity is outpacing governance. Every new agent, workflow, or integration can mint credentials in minutes. Most organizations still track that in spreadsheets.

Egress enforcement needs two layers. When defaultMode is deny, the operator generates a Kubernetes NetworkPolicy that blocks all egress from the agent pod except DNS and the cluster gateway. That’s kernel-level, provided the cluster’s CNI plugin enforces NetworkPolicy. The pod can’t bypass it even if the process inside tries to make direct outbound connections. But NetworkPolicy matches on pod selectors, namespace selectors, and IP CIDRs, not hostnames, so it can’t do per-host credential injection. That’s where the sidecar comes in: it handles the hostname routing and injects the right credential for each host. You need both. Without the NetworkPolicy, a rogue process in the pod can bypass the sidecar. Without the sidecar, you have no way to inject Vault secrets per destination.
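The NetworkPolicy half can be sketched with the standard networking.k8s.io/v1 API. This is an illustration, not the operator’s literal output: the policy name, the app: weather-agent pod label, and the DNS namespace are assumptions, and the gateway is assumed to live in the agent-demo namespace.

```yaml
# Sketch only: selectors and namespaces are assumptions.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: weather-agent-egress
spec:
  podSelector:
    matchLabels:
      app: weather-agent             # assumed pod label
  policyTypes:
    - Egress                         # egress not matched below is denied
  egress:
    # Rule 1: DNS lookups. The DNS namespace and port vary by distribution.
    - to:
        - namespaceSelector:
            matchLabels:
              kubernetes.io/metadata.name: kube-system
      ports:
        - protocol: UDP
          port: 53
        - protocol: TCP
          port: 53
    # Rule 2: traffic to the namespace that hosts the gateway.
    - to:
        - namespaceSelector:
            matchLabels:
              kubernetes.io/metadata.name: agent-demo
```

Because Egress is listed in policyTypes, anything that does not match one of these two rules is dropped, which is what gives the deny-all default.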

The sidecar config for our weather agent looks like:

```yaml
gateway:
  host: agent-gateway.agent-demo.svc.cluster.local
  mode: passthrough
  allowedAgents: [summarizer, classifier]
external:
  defaultMode: deny
  rules:
    - host: api.openweathermap.org
      mode: vault
      vaultPath: secret/data/openweathermap
      header: x-api-key
    - host: weather.gov
      mode: passthrough
```

The agent makes a regular HTTP call to api.openweathermap.org. The sidecar intercepts it, fetches the API key from Vault, injects it into the x-api-key header, and forwards. The agent never sees the credential. If the agent is compromised, the attacker gets an HTTP client that can call two whitelisted hosts. They don’t get the API key. They can’t call anything else because the default mode is deny.

Compare that to an environment variable containing the raw API key that the agent reads directly. A compromised agent with that pattern gets the credential itself, forever, until someone rotates it manually.

MCP Gateway integration

If an agent speaks MCP, the controller also creates an MCPServerRegistration. This registers the agent’s tools with the MCP Gateway, which aggregates tools from multiple MCP servers behind a single /mcp endpoint. A router parses the tool name prefix and sends each call to the right backend.

Tool access is tier-based. Standard agents don’t get MCP tools. Premium agents do, through a virtualServerRef in their policy:

```yaml
mcpTools:
  virtualServerRef: premium-tools-vs
```

The MCP Gateway CRD isn’t installed on every cluster. The controller checks for it and skips if missing. It logs a message and moves on. No crash, just “MCP not available, skipping.”

This is why I think of it as an agent gateway and not just a security controller. User-to-agent calls go through the gateway. Agent-to-agent calls go through the gateway. MCP tool calls go through the MCP Gateway. Outbound API calls go through the sidecar. There’s no path that bypasses enforcement.

Lifecycle management

Everything the controller generates has an owner reference pointing back to the source CRD. Delete an AgentCard and its HTTPRoute is deleted with it. Delete an AgentPolicy and the AuthPolicy, RateLimitPolicy, NetworkPolicy, and ConfigMap all cascade-delete automatically. Recreate the AgentCard and the controller regenerates everything within seconds.

This matters when agents are ephemeral. A scale-to-zero agent that wakes up on demand gets its full security posture reconstructed as part of the reconciliation loop. No manual cleanup, no orphaned resources.
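The owner reference behind that cascade is plain Kubernetes metadata. Here is a sketch of how it might look on a generated HTTPRoute, with an assumed object name and a placeholder uid:

```yaml
# Sketch only: metadata fragment of a generated object.
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: weather-agent                # assumed name
  ownerReferences:
    - apiVersion: kagenti.com/v1alpha1
      kind: AgentCard
      name: weather-agent
      uid: 00000000-0000-0000-0000-000000000000   # placeholder for the AgentCard's metadata.uid
      controller: true               # marks the AgentCard as the managing owner
      blockOwnerDeletion: true
```

Once the owning AgentCard is deleted, the Kubernetes garbage collector removes any object that lists it as an owner, so the operator needs no cleanup code of its own for this path.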

Who does what

The person building the agent adds the label kagenti.com/agent: "true" to the Deployment manifest (quoted, because Kubernetes label values must be strings) and commits to git. They never write a CRD, never run kubectl, never configure a gateway. GitOps deploys. The discovery controller picks up the agent. Done.
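As a minimal sketch, the developer’s entire contribution could be a Deployment like the one below. The image, the app label, and the choice to put the labels on the Deployment’s own metadata are assumptions for illustration. So is the tier label, which is included here only because the AgentCard example carries one.

```yaml
# Sketch only: image and label placement are assumptions.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: weather-agent
  labels:
    kagenti.com/agent: "true"        # opts the workload into discovery
    tier: standard                   # assumed source of the AgentCard's tier label
spec:
  replicas: 1
  selector:
    matchLabels:
      app: weather-agent
  template:
    metadata:
      labels:
        app: weather-agent
    spec:
      containers:
        - name: agent
          image: registry.example.com/weather-agent:latest   # hypothetical image
          ports:
            - containerPort: 8080
```

Nothing in this manifest mentions tokens, rate limits, or credentials.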

The person running the platform writes one AgentPolicy per tier and commits it to the platform repo. standard-tier covers all standard agents. premium-tier covers the ones that need higher rate limits, MCP tool access, or token exchange for OAuth2 APIs. New agents inherit the matching policy automatically.
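A premium-tier policy might look something like the sketch below. It is assembled only from fields that appear in the earlier examples. Placing mcpTools directly under spec, reusing the same allowed callers, and the value 300 are assumptions. A token exchange rule is left out because its field names are not shown in this post.

```yaml
# Sketch only: field placement and values are assumptions.
apiVersion: kagenti.com/v1alpha1
kind: AgentPolicy
metadata:
  name: premium-tier
spec:
  agentSelector:
    matchLabels:
      tier: premium
  ingress:
    allowedAgents: [orchestrator, planner]   # reused from the standard-tier example
  mcpTools:
    virtualServerRef: premium-tools-vs       # assumed to sit under spec
  rateLimit:
    requestsPerMinute: 300                   # assumed value, higher than standard
```

An agent moves between tiers by changing its tier label, with no edits to either policy.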

The two never need to coordinate on security. The agent developer doesn’t know how auth works. The platform engineer doesn’t know what the agent does. That’s the point.

What this doesn’t do

The operator generates configuration. It doesn’t enforce anything itself.

Authorino validates JWTs at the gateway. Limitador counts requests. The sidecar intercepts outbound calls. NetworkPolicy blocks egress at the kernel. MCP Gateway routes tool calls. Each of those existed before this project.

What didn’t exist was a way to configure all of them from one place. Without this operator, every agent needs its own HTTPRoute, AuthPolicy, RateLimitPolicy, NetworkPolicy, ConfigMap — and someone has to keep them consistent. With it, you write one AgentPolicy per tier. New agents inherit the matching policy through labels. Every agent in a tier gets the same security posture without anyone manually wiring it up.

There’s a fair question about whether this abstraction is worth it — why not let the platform engineer write the underlying resources directly? For a handful of agents, maybe that’s fine. For 20+ agents across multiple tiers with consistent credential strategies, the math changes.

Build-time vs runtime

I also built Agent Registry, which solves a different problem at a different stage. The registry does static discovery — an LLM reads your source code and identifies dependencies, tools, models, and skills. It generates a bill of materials and tracks promotion from draft to published. That’s build-time governance.

Agent Access Control is runtime. It probes live endpoints, discovers what’s running, and enforces what each agent can actually reach. The registry knows what an agent depends on. The operator controls what it’s allowed to do with those dependencies once it’s running.

Try it

The controller runs on any Kubernetes cluster with Gateway API. Kuadrant is optional (for auth and rate limiting). The MCP Gateway is optional (for tool federation). Without them, the controller still generates HTTPRoutes and sidecar ConfigMaps.

```shell
git clone https://github.com/agentoperations/agent-access-control.git
cd agent-access-control
make install   # CRDs
make deploy    # Controller + RBAC
kubectl apply -f config/samples/
```

There’s a narrated demo showing the full lifecycle on a live OpenShift cluster, and a second demo that walks through the three layers of enforcement.

There’s also a slide deck that walks through the architecture, personas, and MCP Gateway integration.

The code is Apache 2.0 on GitHub. The APIs are pre-alpha so expect breakage. But the pattern — making the platform responsible for agent security so the people building agents don’t have to be — is what I think is worth talking about.

Agents should fetch weather forecasts and review pull requests. Not validate JWTs.